We have entered a new phase of AI adoption.

The novelty is gone. The tools are everywhere. The creative teams are using them whether you have a policy or not.

Now the real question shows up right before launch:

Do we label this?

An industry framework from the IAB recently attempted to answer that. The guidance is simple on the surface: disclose when AI materially shapes authenticity, identity, or representation in a way that could mislead a reasonable consumer.

That sounds clean.

It is not.

The Word Doing All the Work

Everything hinges on one word: material.

Material to whom?

If a designer uses AI to generate a starting composition that is later refined, composited, and approved by humans, is that materially different from traditional digital workflows?

If a background is generated but the product is real, what exactly is the consumer being misled about?

Most real-world creative is not fully synthetic. It’s assisted. Iterative. Hybrid.

That means the hard part is not spotting deepfakes. It is defining thresholds for blended work.

Once you introduce interpretation into a system, you introduce inconsistency.

Two Layers, One Risk

Most current thinking organizes this into two layers.

The first is infrastructure. Metadata embedded in the asset. A record of what tools were used and whether disclosure was evaluated.

The second is human judgment. Someone applies the threshold and decides whether the viewer needs to know.

That separation makes sense.

But metadata can be stripped. Platforms may apply their own labeling rules. Internal teams may apply the threshold differently.

Which means the real risk is not a missing badge. It is misalignment.

If one team labels every minor AI assist and another labels only fully synthetic imagery, you are not transparent. You are unpredictable.

And unpredictability erodes trust faster than almost anything.

This Is a Systems Question

It is tempting to treat AI disclosure as a creative toggle.

Add label. Do not add label.

But disclosure is downstream of policy.

It reflects how clearly you have defined material impact. How consistently your teams evaluate it. How well your workflow supports documentation.

Transparency is not a sparkle icon in the corner of an ad.

It is a decision architecture.

We built machines that can fabricate convincing reality in seconds. We are still building the systems that decide when to tell people.

That feels about right.

How We Chose to Handle It

At A&G, we realized pretty quickly that debating disclosure asset by asset was not sustainable.

If every campaign triggered a philosophical debate about what counts as material, we would stall the work. Worse, different teams would answer differently.

So we stopped asking “Should we label this?” as the starting point.

We started asking:

What system governs the decision?

Step One: Define Provenance Internally

Before worrying about consumer labels, we clarified something simpler.

Every AI-assisted asset must have documented provenance.

Not just “AI was used.” That is too vague to be useful.

We document:

- The tool used.

- The scope of AI contribution.

- Whether the AI output was a base layer, background element, voice, or structural starting point.

- Confirmation of human review and approval.

- Confirmation that no real individual was simulated without authorization.

- Confirmation that no copyrighted styles were replicated.

This shifts the burden from optics to accountability.

AI systems are tools. They are not authors. We retain responsibility for what ships.

That principle drives everything else.
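To make the provenance checklist above concrete, here is a minimal sketch of what one record could look like in code. It is an illustration, not our actual tooling: the class, field, and method names are all hypothetical, and the only thing taken from the checklist is the set of items being recorded.

```python
from dataclasses import dataclass
from enum import Enum


class ContributionRole(Enum):
    """How the AI output was used in the asset (categories from the checklist)."""
    BASE_LAYER = "base_layer"
    BACKGROUND_ELEMENT = "background_element"
    VOICE = "voice"
    STRUCTURAL_STARTING_POINT = "structural_starting_point"


@dataclass(frozen=True)
class ProvenanceRecord:
    # All field names are hypothetical; they mirror the checklist items.
    asset_id: str
    tool: str                               # the tool used
    scope: str                              # scope of the AI contribution, in plain words
    role: ContributionRole                  # base layer, background, voice, starting point
    human_reviewed: bool                    # human review and approval confirmed
    no_unauthorized_likeness: bool          # no real individual simulated without authorization
    no_copyrighted_style_replication: bool  # no copyrighted styles replicated

    def missing_confirmations(self) -> list[str]:
        """Return the confirmations that have not been given yet."""
        checks = {
            "human review and approval": self.human_reviewed,
            "no unauthorized simulation of a real individual": self.no_unauthorized_likeness,
            "no copyrighted styles replicated": self.no_copyrighted_style_replication,
        }
        return [name for name, confirmed in checks.items() if not confirmed]

    def is_complete(self) -> bool:
        """'AI was used' is not enough: tool and scope must be filled in too."""
        has_detail = bool(self.tool.strip()) and bool(self.scope.strip())
        return has_detail and not self.missing_confirmations()
```

A record with an unconfirmed item reports exactly which confirmation is outstanding, which is the accountability part: the gap is named, not implied.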

Step Two: Separate Sandbox from Production

We also distinguish between exploratory work and production work.

Creative sandbox output can move fast. Ideas can be tested. Concepts can be generated.

But if a sandbox asset is shown externally, it is identified clearly as conceptual and not final.

Production work triggers stricter review.

That distinction matters.

It prevents over-policing ideation while protecting what actually goes into market.
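The sandbox and production split described above can be written down as a small rule. This is a sketch under assumptions: the `Stage` enum, the function name, and the requirement strings are hypothetical, and what "stricter review" actually contains is not spelled out here.

```python
from enum import Enum


class Stage(Enum):
    SANDBOX = "sandbox"        # exploratory work: ideas, tests, concepts
    PRODUCTION = "production"  # work that actually goes into market


def release_requirements(stage: Stage, shown_externally: bool) -> list[str]:
    """Return what must happen before an asset leaves the building (hypothetical)."""
    if stage is Stage.PRODUCTION:
        # Production work always triggers the stricter review.
        return ["stricter production review"]
    if shown_externally:
        # Sandbox work may be shown, but never presented as finished.
        return ["identify clearly as conceptual, not final"]
    # Internal sandbox exploration moves fast with no extra gate.
    return []
```

The empty list for internal sandbox work is the point: ideation is left alone, and the gates sit only where something reaches an outside audience.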

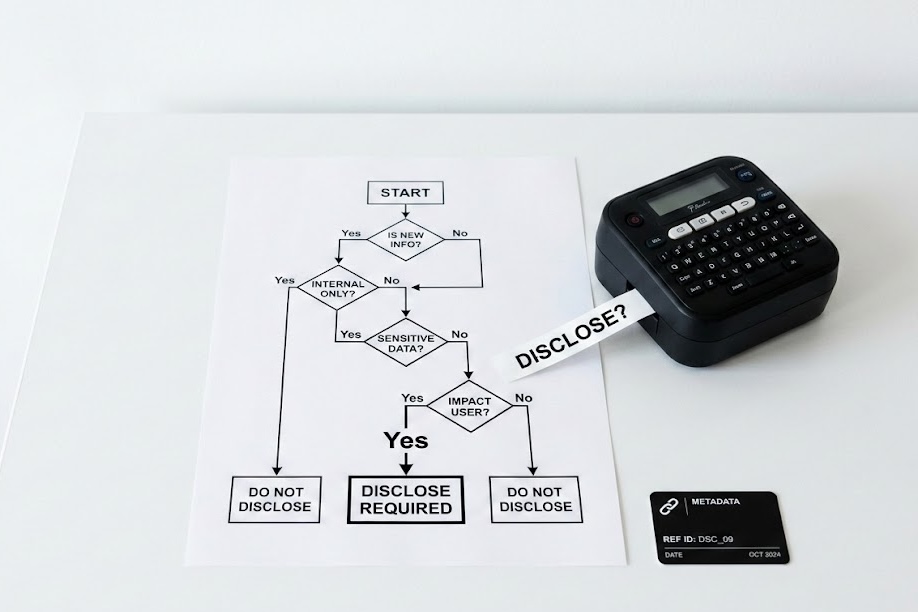

Step Three: Establish a Decision Tree

The real friction point was materiality.

So we built a workflow decision tree.

Not a legal memo. A practical flow.

Does the asset simulate a real person?

Is a synthetic human depicted in a primary role?

Is an AI-generated voice speaking words a person never said?

Is the imagery fully generated from a prompt in a way a reasonable consumer would assume is photographic?

If the answer to any of these is yes, disclosure is required.

These are not edge cases. They come up constantly.

If AI served as an assistive tool, analogous to traditional editing, and did not alter the core representation, disclosure is generally not required.

The point is not perfection.

The point is consistency.

Once the thresholds are defined in advance, you reduce the emotional debate at launch time.
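Defining thresholds in advance can be as literal as writing them down as a function. The sketch below encodes the four questions from the decision flow above. The names are hypothetical, and the answers to each question are assumed to come from a human reviewer, not from automated detection.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AssetFacts:
    """A reviewer's answers to the four questions (field names are hypothetical)."""
    simulates_real_person: bool = False
    synthetic_human_in_primary_role: bool = False
    ai_voice_says_unspoken_words: bool = False
    fully_generated_reads_as_photo: bool = False


# Each trigger pairs a field with the reason recorded when it applies.
TRIGGERS = [
    ("simulates_real_person", "simulates a real person"),
    ("synthetic_human_in_primary_role", "synthetic human in a primary role"),
    ("ai_voice_says_unspoken_words", "AI voice speaks words a person never said"),
    ("fully_generated_reads_as_photo", "fully generated imagery that reads as photographic"),
]


def disclosure_triggers(facts: AssetFacts) -> list[str]:
    """Return every reason disclosure applies, in a fixed order."""
    return [reason for field, reason in TRIGGERS if getattr(facts, field)]


def disclosure_required(facts: AssetFacts) -> bool:
    """Any single trigger is enough to require disclosure."""
    return bool(disclosure_triggers(facts))
```

An asset with a generated background and a real product answers no to all four questions, so `disclosure_required` returns `False`. That corresponds to "generally not required", not "never": a reviewer still owns the call. Returning the reasons alongside the yes or no means two teams looking at the same asset can compare why they decided, not just what they decided.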

Step Four: The System and Carrier Layer

Documentation lives inside our workflow.

We also think about how AI involvement is declared and preserved.

There are emerging specifications that embed provenance metadata inside digital files. In theory, this allows downstream verification and platform alignment.

In practice, metadata does not always survive distribution intact.

Which means internal documentation is not optional. It is primary.

We treat metadata as an infrastructure bonus, not as the backbone of compliance.

The backbone is policy-driven review and documented human accountability.

The Quiet Shift

The public conversation about AI disclosure often centers on icons and labels.

Our internal shift was more structural.

Instead of asking how loudly to declare AI use, we asked how defensible our decision process would be if questioned later.

That is a different posture.

It assumes the environment will evolve. Platform rules will change. Regulation will tighten. Public perception will swing.

A documented, repeatable, policy-led process travels better than a badge in the corner of an image.

We did not solve ambiguity by labeling everything.

We solved it by defining how decisions are made, documenting them, and applying them consistently.

Transparency is less about what the consumer sees and more about whether your organization can defend the system behind it.