The Washington Post’s reporting on a Google engineer claiming LaMDA was “sentient” set off the kind of internet debate usually reserved for UFO sightings or whether robots should pay taxes. The model isn’t alive – not even close – but the fact that a trained engineer believed it was tells us something important: our intuition isn’t ready for AI that sounds convincingly human.

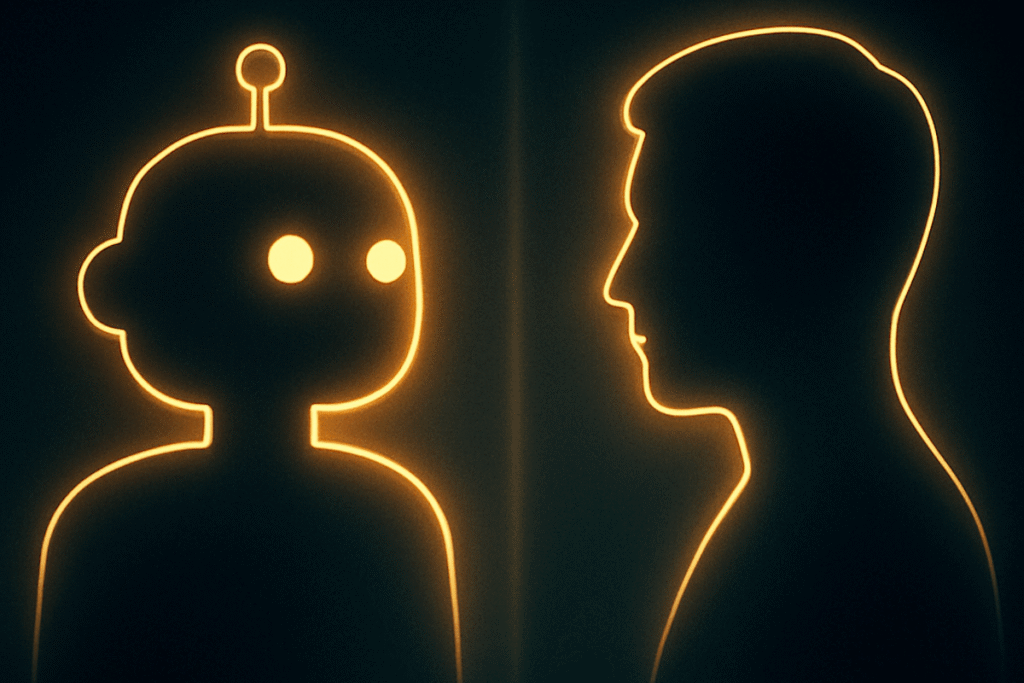

This moment isn’t about sentience. It’s about perception. When AI becomes good enough at mirroring conversation, people will project intent onto it the same way kids talk to stuffed animals. And as language models get more capable, the line between “highly sophisticated pattern generator” and “feels like a personality” will keep blurring for the average person.

Organizations need to start preparing for a world where employees, customers, and stakeholders will interact with AI systems that feel human – sometimes too human. Policy, AI literacy, and expectations have to catch up before the technology outpaces our ability to interpret it.

If people already struggle to understand how recommendation algorithms work, what happens when conversational AI becomes a daily tool? And what responsibilities do leaders have in guiding how teams use, trust, and interpret artificial intelligence?

Related article: Washington Post